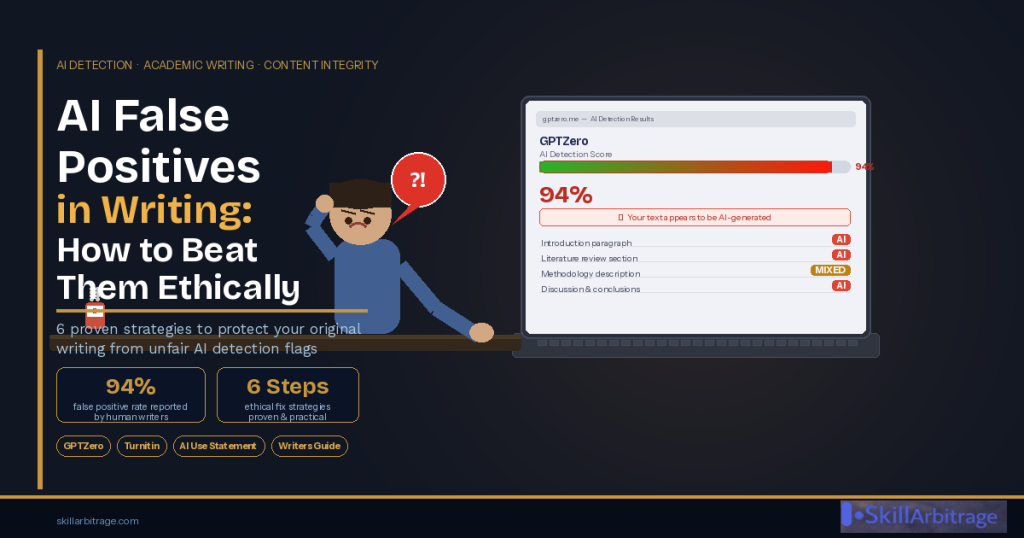

You spent hours researching. You wrote every sentence yourself. You submitted your work with confidence and then received a note saying it had been flagged as largely AI-generated. If this has happened to you, you are not alone, and you are not the problem.

AI false positives in writing are now one of the most widespread and damaging issues facing academic writers, journalists, content creators, and essayists worldwide. In this guide, you will learn exactly why AI detection tools produce false results, six concrete ethical strategies to reduce your risk of being wrongly flagged, and a step-by-step template for writing an AI-use statement that satisfies even the strictest journal requirements.

What Are AI False Positives in Writing — and Why Are They Happening?

An AI false positive occurs when an AI detection tool classifies human-written content as AI-generated. This is not a rare edge case; it is a systematic problem built into how detection technology works.

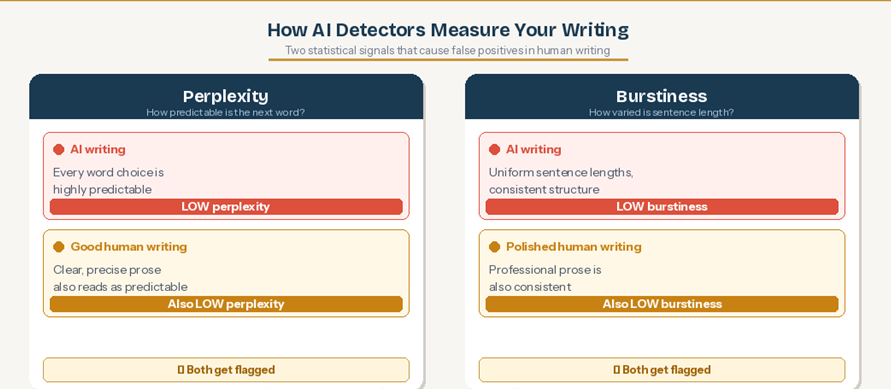

Most detection tools, including GPTZero, Originality.ai, and Turnitin’s AI detector, do not evaluate writing the way a human reader would. They do not assess argument quality, originality of thought, or depth of knowledge. Instead, they analyse two statistical properties of text:

Perplexity — How Predictable Is the Next Word?

Perplexity measures how expected each word is given the words before it. Predictable text scores low; surprising text scores high. AI-generated text tends to be highly predictable because it chooses statistically likely word sequences. Human writing tends to be less predictable, using unexpected word choices, idiomatic expressions, and field-specific terminology.

Here is the problem: if you have trained yourself to write clearly, precisely, and professionally, the way good academic writers do, your text may also show low perplexity. The more coherent and well-structured your prose is, the more it can resemble AI output to a statistical model.
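To make the idea concrete, here is a minimal sketch of a perplexity calculation. It is a toy, not how any named detector works: real tools score text with large language models, whereas this example assumes a simple word-frequency (unigram) model with add-one smoothing, and the function name `unigram_perplexity` and the sample sentences are invented for illustration.

```python
import math
from collections import Counter


def unigram_perplexity(text: str, reference: str) -> float:
    """Perplexity of `text` under a unigram model fitted on `reference`.

    Assumption: add-one smoothing with a single extra slot for unseen words.
    """
    ref_tokens = reference.lower().split()
    counts = Counter(ref_tokens)
    vocab_size = len(counts) + 1  # +1 slot shared by all unseen words
    total = len(ref_tokens)

    tokens = text.lower().split()
    if not tokens:
        raise ValueError("text must contain at least one word")

    # Sum the log-probability of each word, then average and exponentiate.
    log_prob_sum = 0.0
    for tok in tokens:
        prob = (counts[tok] + 1) / (total + vocab_size)
        log_prob_sum += math.log(prob)
    return math.exp(-log_prob_sum / len(tokens))


reference = "the cat sat on the mat and the dog sat on the rug"
predictable = "the cat sat on the mat"
surprising = "quantum marmalade sat sideways"

print(unigram_perplexity(predictable, reference))
print(unigram_perplexity(surprising, reference))
```

The first sentence reuses words the model has already seen, so it scores lower than the second. Clear, conventional academic phrasing behaves like the first sentence, which is why it can look "machine-like" to a statistical model.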

Burstiness — How Much Does Sentence Structure Vary?

Burstiness measures variation in sentence length and structure. Human writing is typically ‘bursty’: we mix long, complex sentences with short, punchy ones. AI writing tends to be more uniform.

But again, if your writing style is already polished and consistent, as it should be for academic publication, burstiness may be lower than average, triggering a false positive. The detector cannot distinguish between AI uniformity and human polish.

The Standardisation Problem

There is a third, systemic cause that rarely gets discussed. As more people write with AI assistance, edit with AI, and learn writing through AI-aided feedback, a globally standardised writing style is emerging. It is characterised by shorter sentences, cleaner structure, and minimal grammatical irregularities.

These traits are now wrongly associated with AI output. The more you write like a skilled professional, the more you risk being flagged. The irony is sharp: improvement in writing quality is being penalised by tools designed to detect low-quality automation.

“Ironically, the more we write like good writers, the more we risk being flagged. The very traits that indicate mastery (clarity, precision, consistency) are being misread as machine output.”

Why AI False Positives Are a Serious Professional Problem

Being wrongly flagged is not just an inconvenience. For academic writers in particular, the consequences can be severe.

Journals and publications operate under AI-use policies. Most do not impose a blanket ban on AI, but they require disclosure of how and when it was used. If you have not used AI to write your article, there is nothing to disclose. But a faulty detector score can lead an editor to believe you violated the policy. That misunderstanding alone can raise integrity flags, delay publication, or result in outright rejection, all for something you did not do.

Unlike plagiarism tools, AI detectors do not show verifiable sources. They produce only a probability score, with no clear rationale for their classification and no standard appeal process. You may know the flag is wrong. Proving it to an editor is a different challenge entirely.

6 Ethical Strategies to Reduce AI False Positives in Your Writing

There is no single fix that works in every situation: detection tools vary, journals vary, and writing contexts vary. But the following six strategies, applied consistently, can significantly reduce your risk of being wrongly flagged while keeping your writing fully original and compliant.

Strategy 1 — Use AI as a Research Assistant, Not a Ghostwriter

The clearest line between ethical and problematic AI use is this: AI should inform your thinking, not replace it. When using tools like ChatGPT or Claude, restrict their role to the research and ideation phase:

- Ask it to list recent studies or key findings in a domain

- Use it to explain a difficult concept in simpler terms before you write about it in your own words

- Ask it to suggest possible structures or subheadings

- Instruct it to identify contrasting viewpoints you should address

When it comes to actual writing, every sentence should come from your own analytical judgment and original phrasing. This single practice will drastically reduce the statistical similarity between your output and AI-generated text because your word choices, reasoning patterns, and sentence constructions will be genuinely yours.

Strategy 2 — Write the First Draft Entirely From Scratch

Write your first draft the way you naturally would, using your own logic, tone, and sentence flow, without any AI involvement at all. Once that first version exists, you can use AI tools in a limited, supportive role:

- Identify logical gaps or coherence problems

- Suggest improvements to clarity in specific sentences

- Flag jargon or unnecessary wordiness

This approach keeps intellectual and creative ownership firmly with you. The structure, the argument, and the voice are established before any AI tool touches the work. AI then acts as an editor, not a co-author, and the statistical fingerprint of your original draft carries through to the final version.

Strategy 3 — Do Not Over Polish Your Writing With AI

One of the most common causes of false positives is excessive use of AI editing tools (Grammarly, QuillBot, ChatGPT, or Claude) to rewrite and refine until the prose feels ‘perfect’. The result is often robotic sentence structure, low burstiness, and over-clean phrasing that reliably triggers detection tools.

When using any AI-based editing tool, apply a simple discipline:

- Accept only a minority of suggestions (roughly 30–40% is a reasonable rule of thumb): choose the ones that genuinely improve clarity without changing your voice

- Preserve your natural tone, rhythm, and phrasing even when alternatives are offered

- Deliberately vary your sentence lengths and transitions; do not let a tool homogenise them

- Keep active voice, personal turns of phrase, and domain-specific idioms, because these are your human fingerprints

The goal is not to produce the most polished possible prose. It is to produce writing that is clear, credible, and authentically yours. Some roughness is a feature, not a bug.

Strategy 4 — Check Your Own Work Before Submission

Before submitting to any journal or client, run your work through the same detection tool they are likely to use, or a comparable one. GPTZero, Originality.ai, and Copyleaks all offer free or low-cost checks. This gives you advance warning of any passages that are likely to trigger a false positive.

If you find flagged sections:

- Rework those passages without compromising the substance — change sentence order, vary structure, add a direct quote or citation

- Increase sentence length variation in those sections

- Add a specific example, observation, or data point that grounds the prose in something genuinely concrete

This is not gaming the system. Detectors are imperfect tools. Checking your own work before submission is the same discipline as proofreading for grammar: you are preventing a technical error from misrepresenting your work.

Strategy 5 — Document Your Writing Process

For high-stakes submissions (journal papers, commissioned research, long-form editorial work), keep a contemporaneous record of your process. This does not need to be elaborate. A shared Google Doc or Notion page with timestamped drafts, research notes, and any AI prompts you used (if any) is sufficient.

This documentation serves as proof of originality and intent if your work is ever questioned. Google Docs automatically preserves version history. Notion timestamps every edit. These records show that your work evolved through genuine drafting and revision, a pattern that AI-generated content simply cannot replicate.
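If you draft in local files rather than in Google Docs or Notion, you can keep a comparable record yourself. The sketch below is one possible approach, not a standard: the function `record_snapshot`, the log format, and the file names in the usage note are all hypothetical. It appends a UTC timestamp, a SHA-256 fingerprint, and a word count for the current draft to a log file.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def record_snapshot(draft_path: str, log_path: str, note: str = "") -> dict:
    """Append one timestamped fingerprint of the draft to a JSON-lines log."""
    text = Path(draft_path).read_text(encoding="utf-8")
    entry = {
        "timestamp_utc": datetime.now(timezone.utc).isoformat(),
        # The hash changes whenever the draft changes, so a series of
        # entries shows the text evolving over time.
        "sha256": hashlib.sha256(text.encode("utf-8")).hexdigest(),
        "word_count": len(text.split()),
        "note": note,
    }
    with open(log_path, "a", encoding="utf-8") as log:
        log.write(json.dumps(entry) + "\n")
    return entry
```

Calling `record_snapshot("draft.md", "writing_log.jsonl", note="revised introduction")` at the end of each writing session builds up a simple trail. A local log is self-reported and can be edited, so treat it as a supplement to third-party version history rather than a replacement for it.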

Strategy 6 — Disclose AI Usage Honestly and Precisely

If your journal, client, or institution requires an AI-use statement, write one. Do not avoid it and do not overstate your AI use in an attempt to appear compliant. The statement should be accurate, describing exactly what AI was used for, at what stage, and how you verified or replaced its output.

Being transparent about legitimate AI assistance earns trust and aligns you with the direction the publishing industry is moving. Nature, IEEE, Springer, Wiley, Taylor & Francis, and most other major publishers now require AI-use disclosure as standard. Writing a clear, honest statement is a professional skill that academic writers increasingly need to master.

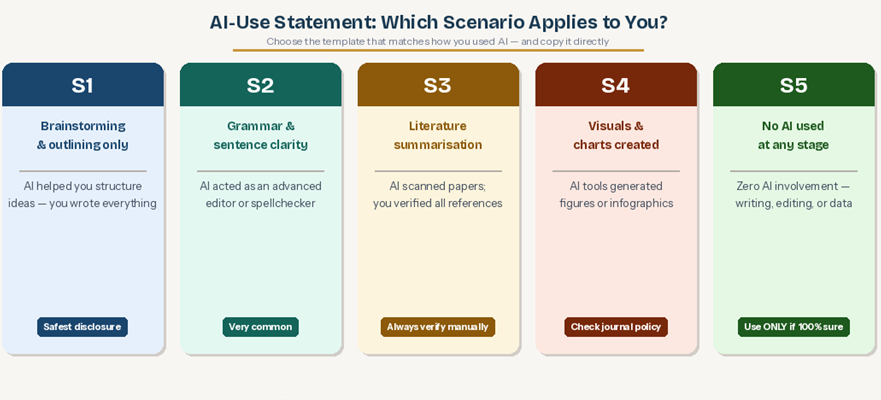

How to Write an AI-Use Statement — 5 Scenarios With Templates

AI-use statements are now a standard requirement across academic publishing. Here is a practical guide covering the most common scenarios you are likely to encounter.

Key Principles Before You Write

- Be specific — name the stage at which AI was used (ideation, summarisation, editing, etc.)

- Name the tool — ChatGPT, Grammarly, Scite.ai, Claude, or whichever tool you used

- Confirm human oversight — journals want to see that you maintained control over the final content and verified AI-produced material

- Align with the journal’s policy — if the journal provides prescribed language or a required format, use it

Scenario 1: AI Used Only for Brainstorming or Outlining

Template:

“AI tools (e.g., ChatGPT) were used only to generate initial outlines and to explore alternative ways of structuring the content. All writing, analysis, and editing were performed independently by the author.”

Use this when AI helped you think through structure but had no role in producing the actual text. This is the most conservative disclosure: it signals AI-as-mind-map, not AI-as-writer.

Scenario 2: AI Used for Grammar or Sentence Clarity

Template:

“An AI-based writing assistant (such as Grammarly or ChatGPT) was used to suggest improvements for sentence clarity and grammar. All substantive writing, reasoning, and editing were carried out by the author.”

This covers the most common use case: AI as an advanced editor or spellchecker. Declaring it protects you from any appearance of non-disclosure and positions AI as a tool rather than a contributor.

Scenario 3: AI Used to Summarise Research or Identify Citations

Template:

“AI tools were employed to summarise publicly available research papers and to identify relevant citations during the literature review stage. All summaries and references were manually verified by the author before inclusion.”

This is essential if you used Scite.ai, Elicit, Consensus, or ChatGPT to scan literature. The phrase ‘manually verified’ is important: it tells the journal that you did not take AI summaries at face value.

Scenario 4: AI Used to Create Visuals or Charts

Template:

“Visual elements in this paper were developed with the assistance of AI-powered design tools. The content, interpretation, and final design decisions were made by the author.”

Some journals, particularly in medicine and the social sciences, have stricter policies on AI-generated visuals. If in doubt, check the journal’s specific guidance before using AI for figures or infographics.

Scenario 5: No AI Was Used at Any Stage

Template:

“No AI tools were used in the writing, editing, data analysis, or visual design stages of this work.”

Use this only when you are entirely certain. If AI played any role, even rephrasing a sentence or checking a grammar point, choose one of the above templates instead. A false ‘no AI’ declaration is far more damaging than an honest disclosure of minor use.

What Happens When the Detector Is Still Wrong?

Even after applying all six strategies, there may be cases where a detector still flags your work. If that happens, here is how to respond professionally:

- Request the specific passages flagged – many tools can highlight which sections triggered the classification

- Provide your draft history as evidence – timestamped Google Docs version history is particularly compelling

- Point to the methodological limitations of AI detection tools — there is substantial published research on their high false-positive rates, and citing it in your response is legitimate

- Offer to rewrite the flagged section in the presence of the editor or under timed conditions if the journal is willing — this is an unusual request but demonstrates confidence in your authorship

The key point to communicate is that a detector score is a probability, not a verdict. You are not required to accept a tool’s classification as fact, and most editors will be responsive to a calm, evidence-based rebuttal.
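A short calculation shows why a flag on its own is weak evidence. The sketch below applies Bayes' rule to ask what fraction of flagged submissions are actually AI-written. Every number in it is a hypothetical input chosen for illustration, not a measured rate for any real detector or journal, and the function name `prob_ai_given_flag` is invented here.

```python
def prob_ai_given_flag(
    base_rate: float,
    true_positive_rate: float,
    false_positive_rate: float,
) -> float:
    """Probability that a flagged text is AI-written, via Bayes' rule.

    base_rate: share of all submissions that are AI-written.
    true_positive_rate: share of AI-written texts the detector flags.
    false_positive_rate: share of human-written texts the detector flags.
    """
    flagged_ai = base_rate * true_positive_rate
    flagged_human = (1 - base_rate) * false_positive_rate
    total_flagged = flagged_ai + flagged_human
    if total_flagged == 0:
        raise ValueError("the detector never flags anything with these rates")
    return flagged_ai / total_flagged


# Hypothetical inputs: 10% of submissions are AI-written, the detector
# catches 90% of them, and it wrongly flags 5% of human-written work.
posterior = prob_ai_given_flag(0.10, 0.90, 0.05)
print(round(posterior, 3))
```

Under these assumed rates, about two in three flagged texts are AI-written, which means roughly one in three flags lands on a human author. The exact figures will differ for any real tool, but the structure of the argument is the same, and it is a reasonable point to raise in a rebuttal.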
